mirror of

https://github.com/danny-avila/LibreChat.git

synced 2026-04-06 16:12:30 +02:00

🍎 feat: Apple MLX as Known Endpoint (#2580)

* add integration with Apple MLX
* fix: apple icon + image mkd link

---------

Co-authored-by: “Extremys” <“Extremys@email.com”>
Co-authored-by: Danny Avila <danny@librechat.ai>

parent 0e50c07e3f
commit d21a05606e

8 changed files with 77 additions and 0 deletions

@@ -46,6 +46,15 @@ const exampleConfig = {
          fetch: false,
        },
      },
      {
        name: 'MLX',
        apiKey: 'user_provided',
        baseURL: 'http://localhost:8080/v1/',
        models: {
          default: ['Meta-Llama-3-8B-Instruct-4bit'],
          fetch: false,
        },
      },
    ],
  },
};


BIN

client/public/assets/mlx.png

Normal file

Binary file not shown.

Size: 82 KiB


@@ -9,6 +9,7 @@ const knownEndpointAssets = {
  [KnownEndpoints.fireworks]: '/assets/fireworks.png',
  [KnownEndpoints.groq]: '/assets/groq.png',
  [KnownEndpoints.mistral]: '/assets/mistral.png',
  [KnownEndpoints.mlx]: '/assets/mlx.png',
  [KnownEndpoints.ollama]: '/assets/ollama.png',
  [KnownEndpoints.openrouter]: '/assets/openrouter.png',
  [KnownEndpoints.perplexity]: '/assets/perplexity.png',


@ -271,6 +271,40 @@ Some of the endpoints are marked as **Known,** which means they might have speci

|

|||

|

||||

|

||||

|

||||

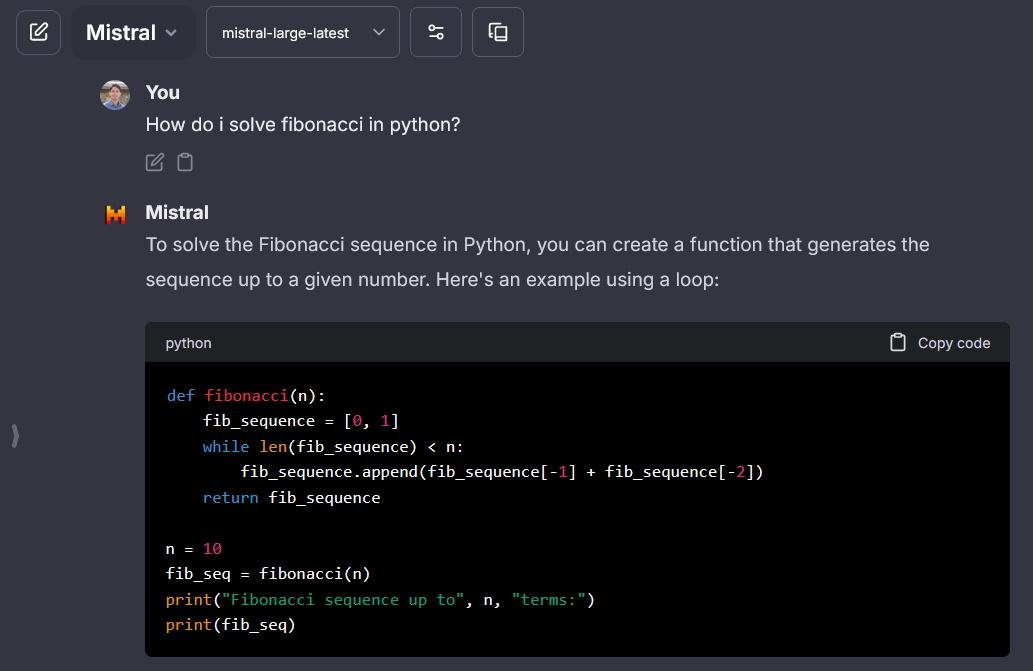

## Apple MLX

|

||||

> MLX API key: ignored - [MLX OpenAI Compatibility](https://github.com/ml-explore/mlx-examples/blob/main/llms/mlx_lm/SERVER.md)

|

||||

|

||||

**Notes:**

|

||||

|

||||

- **Known:** icon provided.

|

||||

|

||||

- API is mostly strict with unrecognized parameters.

|

||||

- Support only one model at a time, otherwise you'll need to run a different endpoint with a different `baseURL`.

|

||||

|

||||

```yaml

|

||||

- name: "MLX"

|

||||

apiKey: "mlx"

|

||||

baseURL: "http://localhost:8080/v1/"

|

||||

models:

|

||||

default: [

|

||||

"Meta-Llama-3-8B-Instruct-4bit"

|

||||

]

|

||||

fetch: false # fetching list of models is not supported

|

||||

titleConvo: true

|

||||

titleModel: "current_model"

|

||||

summarize: false

|

||||

summaryModel: "current_model"

|

||||

forcePrompt: false

|

||||

modelDisplayLabel: "Apple MLX"

|

||||

addParams:

|

||||

max_tokens: 2000

|

||||

"stop": [

|

||||

"<|eot_id|>"

|

||||

]

|

||||

```
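
The `addParams` entry in the YAML example can be read as extra fields layered onto each chat completion request. The following is a minimal Python sketch of that merge, assuming `addParams` values override same-named keys in the outgoing body. The `apply_add_params` helper is hypothetical and is not LibreChat's implementation.

```python
import json


def apply_add_params(payload: dict, add_params: dict) -> dict:
    """Return a copy of payload with add_params layered on top (assumed semantics)."""
    merged = dict(payload)      # shallow copy so the caller's payload is untouched
    merged.update(add_params)   # same-named keys are overridden by add_params
    return merged


# Request body as a client might build it before endpoint-level params are applied.
base_payload = {
    "model": "Meta-Llama-3-8B-Instruct-4bit",
    "messages": [{"role": "user", "content": "Hello"}],
}

# Values taken from the `addParams` block in the YAML example.
add_params = {"max_tokens": 2000, "stop": ["<|eot_id|>"]}

body = apply_add_params(base_payload, add_params)
print(json.dumps(body, indent=2))
```

Because the notes describe the API as mostly strict with unrecognized parameters, keeping `addParams` limited to fields the server accepts (here `max_tokens` and `stop`) is probably the safer choice.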


## Ollama
> Ollama API key: Required but ignored - [Ollama OpenAI Compatibility](https://github.com/ollama/ollama/blob/main/docs/openai.md)


@@ -1213,6 +1213,7 @@ Each endpoint in the `custom` array should have the following structure:
- "Perplexity"
- "together.ai"
- "Ollama"
- "MLX"

### **models**


30

docs/install/configuration/mlx.md

Normal file


@@ -0,0 +1,30 @@
---
title: Apple MLX
description: Using LibreChat with Apple MLX
weight: -6
---
## MLX
Use [MLX](https://ml-explore.github.io/mlx/build/html/index.html) for

* Running large language models locally on Apple Silicon hardware (M1, M2, M3: ARM with unified CPU/GPU memory)

### 1. Install MLX on macOS
#### Apple Silicon (M-series) Macs only
MLX supports GPU acceleration on the Apple Metal backend via the `mlx-lm` Python package. Follow the instructions at [Install `mlx-lm` package](https://github.com/ml-explore/mlx-examples/tree/main/llms).

### 2. Load Models with MLX
MLX supports common Hugging Face models directly, but it is recommended to use the converted, tested, quantized models (chosen to fit your hardware) provided by the [mlx-community](https://huggingface.co/mlx-community).

Follow the instructions at [Install `mlx-lm` package](https://github.com/ml-explore/mlx-examples/tree/main/llms).

1. Browse the available models on [HuggingFace](https://huggingface.co/models?search=mlx-community).
2. Copy the `<author>/<model_id>` text from the model page (e.g. `mlx-community/Meta-Llama-3-8B-Instruct-4bit`).
3. Check the model size. Models that fit in CPU/GPU unified memory perform best.
4. Follow the instructions to launch the model server: [Run OpenAI Compatible Server Locally](https://github.com/ml-explore/mlx-examples/blob/main/llms/mlx_lm/SERVER.md)

```mlx_lm.server --model <author>/<model_id>```
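
Once the server is running, a quick request can confirm that it answers before LibreChat is pointed at it. The following is a minimal sketch using only the Python standard library. It assumes the server listens at `http://localhost:8080/v1/` (the `baseURL` used in this commit's examples) and serves the OpenAI-style `chat/completions` route described in the linked SERVER.md. `build_chat_request` and `ask` are illustrative helper names, and the model string is a placeholder.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1/"  # assumed: same baseURL as the LibreChat examples


def build_chat_request(model: str, prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 64,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str) -> str:
    """Send the request; this only works while mlx_lm.server is running."""
    with urllib.request.urlopen(build_chat_request(model, prompt), timeout=60) as resp:
        reply = json.load(resp)
    # Assumes the OpenAI chat completion response shape.
    return reply["choices"][0]["message"]["content"]


# Building the request needs no network access, so it is safe to inspect offline.
req = build_chat_request("mlx-community/Meta-Llama-3-8B-Instruct-4bit", "Say hello.")
print(req.get_method(), req.full_url)
```

With the server up, calling `ask("mlx-community/Meta-Llama-3-8B-Instruct-4bit", "Say hello.")` should return the generated text. A connection error usually means the server is not running or is listening on a different port.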


### 3. Configure LibreChat
Use the `librechat.yaml` [configuration file (guide here)](./ai_endpoints.md) to add MLX as a separate endpoint; an example with Llama-3 is provided.


@@ -24,6 +24,7 @@ weight: 1
* 🤖 [AI Setup](./configuration/ai_setup.md)
* 🚅 [LiteLLM](./configuration/litellm.md)
* 🦙 [Ollama](./configuration/ollama.md)
* 🍎 [Apple MLX](./configuration/mlx.md)
* 💸 [Free AI APIs](./configuration/free_ai_apis.md)
* 🛂 [Authentication System](./configuration/user_auth_system.md)
* 🍃 [Online MongoDB](./configuration/mongodb.md)


@@ -299,6 +299,7 @@ export enum KnownEndpoints {
  fireworks = 'fireworks',
  groq = 'groq',
  mistral = 'mistral',
  mlx = 'mlx',
  ollama = 'ollama',
  openrouter = 'openrouter',
  perplexity = 'perplexity',
